Psychological Exploitation of Social Engineering Attacks

Author: Christina Lekati

"Our human perimeter? It is fine! Everyone goes through cyber security training on a regular basis! How regular? Oh … once per year … yes, it is a 60 minute long training with some cyber hygiene rules. Oh yes, and social engineering. There is everything about those phishing and vishing attacks in there too. We are doing really well."

These conversations are often held within the safe space of corporate meeting rooms. But at the same time, somewhere else, in underground online forums, other conversations are taking place:

“This is my vishing script with a full walkthrough. The story works 7/10 times. There is a higher rate of success in small/medium companies with fewer resources for cybersecurity”. “Focus the ransomware on those with cyber insurance. They are less careful, and we will be paid quickly.” “Quality over quantity. Let’s look into their vulnerabilities”. “Their sales department is totally exhausted. Hit them first”.

In an almost ironic fashion, on Monday morning, when the security professionals go to work, the social engineers and the cyber attackers have already been at work. The security professionals start their day by catching up with the news, answering emails, and going through their to-do list to ensure that they keep working on strengthening every bit of their technical, physical and human perimeters. Attackers work 7 days a week, looking for that one gap in the organizational security that will let them in.

There is no doubt that cyber criminals often have an asymmetric advantage. They seek to find only one exploitable vulnerability. At the same time, cybersecurity professionals are keeping a company secure on as many levels as possible, fighting for a better budget and for executive and employee buy-in, and making hard decisions that prioritize certain security issues over others.

Cyber security professionals today keep investing heavily in building stronger technological barriers to cyber-attacks. But according to most of the threat reports published within the past few years, cyber attackers today prefer attacking humans rather than technology, because it is simpler. While security technology keeps advancing and security systems become stronger and more complicated to compromise, human psychology has remained the same over centuries and is easier to predict and to exploit. It is a low-cost, low-risk, and high-reward approach for cyber threat actors. Attackers have the choice to brute force their target organization’s passwords, or to use psychology to brute force an unsuspecting employee’s mental processes to get the passwords and other credentials.

It also makes sense: employees who have access to critical assets of an organization become targets of social engineering. The objective of these attacks is to make employees provide access to systems, assets, or sensitive information. Employees who have access to technology and organizational assets are also responsible for the protection of these assets. But are they fit and proper to handle this responsibility? Do they have the awareness and skills necessary to meet these expectations and protect their assets from threat actors and social engineering attacks?

A major challenge in today's cyber security efforts is the lack of effective information security awareness and training. Many organizations and companies of the public and the private sector continue to believe that cyber security is only a technical, not a strategic and behavioral discipline. They believe that cyber security involves only the protection of systems from threats like unauthorized access, not the awareness and training of persons that have authorized access to systems, information and organizational assets. Therefore, they invest significantly less in any processes or measures that have to do with the human perimeter of their organization.

That becomes an exploitable organizational vulnerability. It is a blind spot that attackers are more than willing to exploit.

Engineers of Human Behavior

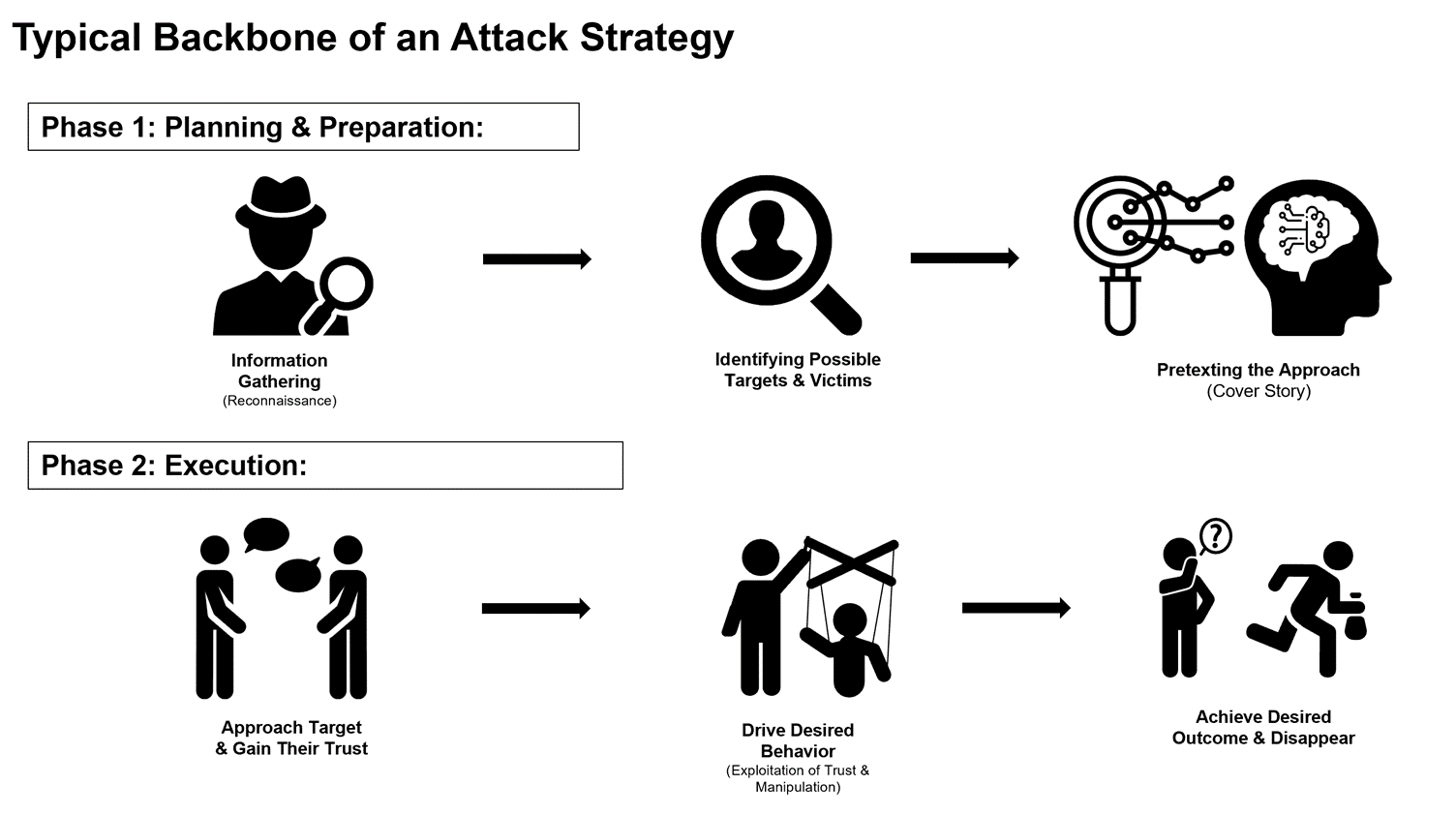

Social engineering is based almost entirely on exploiting human psychology. Adversaries use weaponized psychology, deception techniques, and manipulation to exploit the hard-wired human decision-making mechanisms that drive behaviour and motivation. The stimulus-response effect in human vulnerabilities is consistent, and exploiting these vulnerabilities is consistently successful. But before we discuss the psychological exploitation, it is important to first shed some light on the skillset and the methodology of social engineers.

Social engineers are often great students and observers of human behaviour. Many are naturally talented in social skills, charming, or simply interested in learning about human nature and the art of reading people. They practice and learn to recognize what others think or feel through their non-verbal communication (such as their body language or tone of voice). This is very important, as they learn to navigate a conversation and lead their targets by continuously observing their reactions and adjusting their own approach.

Do their targets sound hesitant or suspicious when engaging with them? If yes, then they take a step back and try to establish more trust. If this does not succeed, they know that they have to abandon this target because further steps could be risky. If, however, they recognize that they have established enough trust and potentially likability with their target, then they start making requests that are riskier and more rewarding for them. It is like running a well-crafted algorithm on human behaviour. This means that social engineers craft their deception approach according to the reactions of their targets, and they adjust their strategy on the fly, according to how their targets react throughout the engagement. By adjusting their own behaviour, they “engineer” and guide the behaviour of their targets.

But this is not all. Professional social engineers study the background of the targets before they engage them. This usually happens by collecting pieces of information found in online platforms like social media or blog posts, or simply by observing them from a distance. They study the work routine of their targets, their actions, words, and anything else that can give them insights into their daily lives, thoughts, needs, and overall behavioural patterns. By having these insights, they tailor their approach in a manner that fits their targets. The goal is to get them on a hook and establish trust within the first few moments of their interaction. This trust advantage will later be essential for the rest of the attack process.

The end goal of social engineers is to make their victims wilfully provide sensitive information or access to company systems and assets. In most scenarios, the victims help the undercover social engineers, because they believe that they will have a benefit, or simply because they like interacting with them and don’t consider that they are putting their organizations at risk.

Social engineering is an art and a science that combines technical and, above all, behavioural skills. In the rest of the article, we will discuss some very common social engineering approaches, along with an analysis of the psychological exploitation that comes into play for a successful attack. However, while there are some common approaches or scenarios, the potential social engineering methodologies and scenarios are endless and depend on the attackers’ imagination and capability.

Programming The Trust Algorithm

No social engineering attack would be possible if the attackers were not able to first build trust with their targets. Whether the attack vector is email, the phone, or a personal interaction, the victims need to first believe the cover story delivered by the attackers. Only then will they take the next steps that the social engineers indicate, which eventually lead to an effective compromise.

But how can someone program the trust process of another individual? Is it even possible to engineer a trust relationship? The answer is yes. In fact, the field of psychology teaches us that there are multiple ways in which you can establish trust with someone. Unfortunately, the rules of trust are the same in both honest relationships and deceptive ones. This is also the reason why it is often hard to tell one from the other when encountering an individual or a communication request (e.g., an email or a phone call).

Let’s take it from the start. All humans naturally pay attention to a few key elements when encountering someone for the first time. These are some of the mental questions that go through our heads, often without us even realising it:

- “I like him/her” (or the opposite)

- “Who is this person?”

- “Why is this person reaching out to me?”

- “Does he / she have authority over me?”

Social engineers know these mental questions, and the fact that we go through this mental checklist within milliseconds of a first interaction. These are the so-called “cognitive filters” we all have in order to evaluate another individual. Social engineers make sure they have good answers to these mental questions, in order to immediately hack through the walls of our initial suspicion and gain a quick trust advantage. They do that by relying heavily on psychological principles that all of us are used to operating by.

Therefore, social engineers often use cover stories that include:

Persons or organizations with authority

People tend to assign immediate trust to authoritative figures and not doubt their intention. Social engineers will impersonate company executives, lawyers or technicians. The attackers have already investigated which authoritative figures are suitable for each of their victims.

Social Proof

People are more willing to do something or trust a situation or interpersonal dynamic when they observe other people doing it first. They also put a lot of weight on other people’s endorsements. Psychological studies repeatedly show that we often evaluate a situation based on what other people think of it. Social engineers exploit this principle by name-dropping. They might contact a target by first mentioning that someone else (often with authority over the target) recommended that they get in touch with the target. Or they initiate contact by saying that they are currently replacing another specific person from a partner company the target used to communicate with. The social engineers can then proceed by engaging the targets in conversations with the goal of gathering information, or by sending malicious emails and cashing in on the trust they have established with the targets through social proof.

Consistency

This is a highly valued trait in today’s society. People associate consistent behaviours with people that are reliable, intelligent, trustworthy, and other highly praised traits. Due to this social norm, people tend to care a lot about appearing consistent. Once someone has made a choice or has taken a stand, they go through great personal and interpersonal pressure to defend that stance. Social engineers often use this principle to gather sensitive information. Once they have made their targets answer small or innocent requests, they request more valuable information. Most often their targets, since they have already gotten in the habit of answering, feel an inner pressure to keep replying to the questions and self-justifying the reasons they do so, even when they start becoming uncomfortable or suspicious. As the conversation progresses, it also becomes harder to turn down the persons they are interacting with.

Liking

People like people who are similar to them, or who show admiration for them. When people are similar to us, we tend to perceive them as belonging to “our tribe”. Psychological studies have shown that when people appear to be or think like we do, we automatically assign some other psychological characteristics to them. Specifically, we automatically assume that they also share similar backgrounds, ways of thinking, and more. Our brains, being highly automated, immediately translate this into trustworthiness. Social engineers invest heavily in building rapport with their targets and work hard to increase their likeability and level of trust.

People prefer to open up and respond positively to requests from people they like. The targets of this approach tend to drop their mental guards rather quickly. The result? They are much more likely to answer questions and provide information, as well as bypass security policies by opening malicious email attachments, or even open physical security doors for the social engineer who is, of course, impersonating someone else.

Scarcity

The thought of losing an attractive opportunity or missing out on a “limited availability” offer tends to hold a lot of weight in the human decision-making process. Items that are limited or scarce are frequently perceived as more valuable and more attractive. This creates desire. But it also creates a sense of urgency. Combined, they make people more than willing to take more than a few shortcuts in their critical thinking processes. In marketing, this principle is in full force when marketers make statements like “this item is selling fast, only 5 left in stock!” or “this is a limited edition item”. In social engineering, we can see this approach in the phishing email scenarios where the targets are promised to “receive 1 of the 15 iPads/iPhones/expensive gadgets available by filling in a survey”, or told to “rush to claim their reward” (by clicking on a malicious link). We can also see this approach when a social engineer places a vishing call, impersonating someone with authority, and claims that they only have 15 minutes to resolve an issue. This tactic connects with the principle of “time pressure” (below).

Urgency / Time pressure

Time pressure is a motivating factor connected to scarcity, not in terms of making an action desirable, but in terms of giving someone a very short amount of time to fulfil a request. The time pressure is often big enough to make one skip essential critical thinking and analytical processes while acting on a request. Social engineers benefit a lot from making their targets skip any real thinking processes. Ideally, they want their targets to operate without thinking at all. Therefore, plenty of social engineering scenarios (pretexts) involve time pressure or other tactics that block our critical thinking. These attacks may come in the form of spear phishing emails impersonating executives who claim that they want an immediate transfer of funds to a specific account, while adding that the matter is time-sensitive. Or they may come in the form of a vishing call from social engineers impersonating technicians, stating that they need to run a few remote updates within the next hour and would therefore need the login credentials of employees, or other sensitive information.

These are only some of the psychological principles that social engineers repeatedly try to exploit. They often combine them, to increase their level of influence.

Incorporating Psychological Pressure into Attack Scenarios

Attackers keep using old and tried cover stories that have proven to be consistently successful, because these stories involve the exploitation of the psychological principles mentioned above. One common attack scenario is the vishing (phone-based) attack, where the attackers call employees and pretend to be IT support staffers. Then they proceed to explain their cover story: for example, they may say that they are running some critical system upgrades and that they need an employee’s username and password in order to proceed. Or they may say that they have developed a new online communication platform for employees and ask them to register for it through a phishing email that they subsequently send, containing a malicious link. They utilize the principle of authority over the target’s technical infrastructure and may include a component of urgency by notifying their targets that their update needs to happen immediately. Although this social engineering scenario has been known for years, attackers keep using it and unfortunately, it still works.

Users can recognize an attack from certain red flags. For example, many social engineering pretexts use the combination of fear and time pressure to push an employee towards immediate action - this is an immediate red flag. Also, no matter what the cover story is, most attacks boil down to specific requests that should make an employee suspicious. For example, requests for sensitive information, or requests to click a link. Employees need to learn how to recognize the red flags, how to respond to them, and how to verify the contact person in a business-appropriate manner. This happens through training.
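The red flags described above can be illustrated with a small Python sketch. It is only a teaching aid under stated assumptions: the pattern names, the keyword lists, and the helper functions `find_red_flags` and `needs_verification` are hypothetical and chosen for illustration. It is not a real detection tool and cannot replace trained human judgement.

```python
import re

# Illustrative keyword patterns only (assumptions, not a vetted detection list).
RED_FLAG_PATTERNS = {
    "time_pressure": r"\b(immediately|urgent(ly)?|right away|within the next hour|asap)\b",
    "fear": r"\b(suspended|locked|disciplinary|penalty|breach)\b",
    "credential_request": r"\b(password|login credentials|username|pin)\b",
    "link_request": r"(https?://\S+|\bclick (on )?(the|this) link\b)",
}


def find_red_flags(message: str) -> list[str]:
    """Return the names of all red-flag patterns found in the message."""
    text = message.lower()
    return [
        name
        for name, pattern in RED_FLAG_PATTERNS.items()
        if re.search(pattern, text)
    ]


def needs_verification(message: str) -> bool:
    """Suggest verifying the sender if the message shows fear combined
    with time pressure, or asks for credentials or a link click."""
    flags = set(find_red_flags(message))
    pressure_combo = {"fear", "time_pressure"} <= flags
    sensitive_request = bool(flags & {"credential_request", "link_request"})
    return pressure_combo or sensitive_request


if __name__ == "__main__":
    call_script = (
        "This is IT support. We are running critical upgrades and "
        "need your username and password immediately."
    )
    print(find_red_flags(call_script))
    print(needs_verification(call_script))
```

The point of the sketch is the decision rule, not the keywords: whatever the cover story, fear combined with time pressure, or a request for credentials or a link click, is a cue to pause and verify the contact person through a separate, trusted channel.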

Human Zero-day Exploits

Although many social engineering techniques or scenarios are known, there are many that are not. Some of them are associated with higher exploitation potential for high-value targets. Social engineers thoroughly investigate their targets and can adopt an approach that is tailored to each specific target, in ways that make the attack difficult to detect. The high-value targets are often members of the C-Suite, executives, or people with important access privileges.

When determined social engineers go after more difficult, yet rewarding targets, they put in the time and effort to analyse these specific targets as well as possible. They may seek to find their routines, interests, habits, physical locations, etc. But what they are most interested in are their psychological characteristics, vulnerabilities and overall psychological profiles. Such threat actors tend to have deep knowledge of psychology and better resources and capabilities, and they often belong to an organized group. They craft psychological profiles by seeking to identify and exploit personal characteristics of the targets they want to victimize.

They will find a way to physically or virtually approach their victims with tempting personas and build a relationship with them. Alternatively, they may choose to blackmail their victims, or recruit them. These types of attacks are based on personal human vulnerabilities of the targets that even the victims themselves might not be aware of, and the security teams in their organization even less so. These are the Human Zero-day exploits. For persons who have privileges and access rights critical for an organization, specialized security experts must come in to conduct a personal vulnerability assessment, and proceed with personalized training based on the assessment.

Epilogue

We cannot fully protect employees against social engineering attacks. What we can do is teach them how to protect themselves and their organizations. The most exploitable factor in social engineering is ignorance. A person who does not know the tactics and methods used by social engineers is defenceless against them.

Social engineers target our employees directly and seek to have an encounter with them. Sooner or later some of our employees will get targeted again by attackers. When this occurs, they will have to:

a) Identify that this is an attempt for a social engineering attack,

b) Respond to the attack by gracefully disengaging,

c) Report it to their organization (given that a reporting mechanism is in place).

They must understand that security is a shared responsibility, and that they play a significant role in it. At the same time, security professionals must understand that this is a growing threat and one that will keep being exploited, unless we build up more knowledge and skills within our organization to combat these attacks. This effort must be supported by a good cybersecurity culture. While attackers develop their skillset and invest time, resources and strategic thinking, it is not enough for us to simply inform employees about the threats. We must build their skillset in recognizing and defending against them, which can only be done with targeted employee trainings. Ideally in person, and more than once per year for 60 minutes.

You may visit:

https://www.cyber-risk-gmbh.com/2_Social_Engineering_Awareness_Defence.html

https://www.cyber-risk-gmbh.com/3_Practical_Social_Engineering.html
